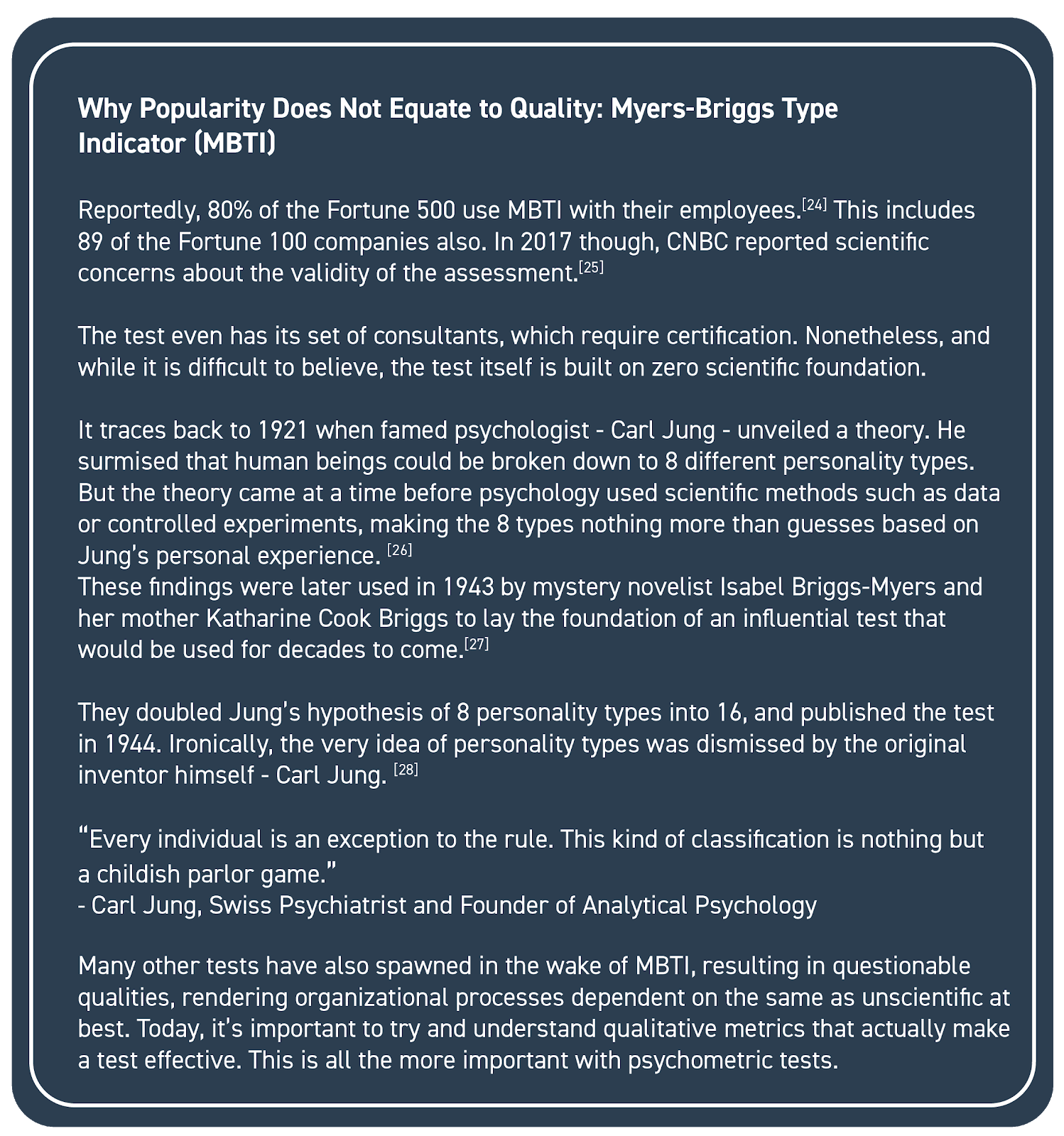

Understanding the Law:

HR generalists, specialists and organizational influencers are often advised to stay legally compliant when adding psychometric tests to organizational processes. Anti-discrimination laws require tests, especially cognitive ability tests, to remain job-relevant and strongly validated.

A recent example is the National Football League, which changed its assessment battery over concerns about racial discrimination and poor prediction of job performance. [29]

The test in question is the Wonderlic Personnel Test, a 12-minute, 50-item questionnaire that the NFL has used since the 1970s. It has recently been reported to have no relation to football success and to show signs of racial bias.

A new test has since been devised under Harold Goldstein, a professor of Industrial & Organizational Psychology, and Cyrus Mehri, a Washington lawyer at the helm of the Fritz Pollard Alliance, which monitors the NFL’s minority hiring practices. The resulting personality test closely resembles the kind used with firefighters.

Tests are also generally required to respect candidates’ privacy and not attempt to diagnose them.

Understanding Business Needs:

Organizations tend to focus far more on the predictors (the “independent variables”) than on what is being predicted (the “dependent variables”). Consider the following:

i) Purpose:

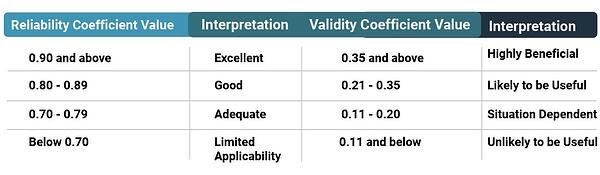

The quality of a test is judged by its validity: it is essential that the test measures what it is intended to measure. Equally, an organization must understand the purpose for which it needs an assessment before selecting one.
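One common way to put a number on this is to correlate test scores (the predictor) with a later measure of job performance (what is being predicted). The sketch below is only an illustration under assumptions: the function name `pearson_r` and all of the data are hypothetical, and a real validation study would need a far larger sample and proper statistical review.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length lists of numbers."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two equal-length samples with at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    # Co-variation of the two samples around their means.
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sample")
    return cov / sqrt(var_x * var_y)


# Hypothetical data: test scores at hiring (independent variable) and
# manager performance ratings one year later (dependent variable).
test_scores = [12, 18, 25, 31, 40]
performance = [2.1, 2.9, 3.0, 3.8, 4.5]

validity = pearson_r(test_scores, performance)
print(f"validity coefficient: {validity:.2f}")
```

A coefficient near zero would suggest the test says little about the outcome the organization actually cares about, however well designed the test itself may be.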

ii) Job Roles:

Psychometric tests are often a combination of different assessments; these combinations are best determined by job role. For example, content writers would require an assessment of verbal comprehension, a cognitive test, while hard labor would call for a physical fitness test.

iii) Industry:

Understanding the industry forms an important part of building your assessment battery. In sales, for example, skills and functions vary with product and buyer sophistication, even within the same job role.[30] A salesperson selling pens requires a different set of skills from one selling IT services.

iv) Geography:

A test developed in India and normed on the Indian population will be considerably more accurate for Indian candidates than one that uses an American norm group. For example, it is more effective in context to use cricket analogies in India than baseball analogies, since baseball is a sport most Indians are unfamiliar with. Likewise, an American audience is unlikely to test well against an Indian standard.
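To see why the norm group matters, consider how the same raw score is interpreted against two different reference populations. This is a minimal sketch under assumptions: the function `percentile_rank` and both norm groups are made up for illustration and do not represent any real test or population.

```python
def percentile_rank(raw_score, norm_scores):
    """Percentage of the norm group scoring below raw_score.

    Ties count as half, a common convention for percentile ranks.
    """
    if not norm_scores:
        raise ValueError("norm group must not be empty")
    below = sum(1 for s in norm_scores if s < raw_score)
    equal = sum(1 for s in norm_scores if s == raw_score)
    return 100 * (below + 0.5 * equal) / len(norm_scores)


# Hypothetical norm groups: raw scores from two reference populations.
norm_group_a = [20, 22, 25, 27, 30, 32, 35, 38]
norm_group_b = [28, 30, 33, 35, 37, 40, 42, 45]

candidate_score = 31
rank_a = percentile_rank(candidate_score, norm_group_a)
rank_b = percentile_rank(candidate_score, norm_group_b)
print(f"against group A: {rank_a:.1f}")  # 62.5
print(f"against group B: {rank_b:.1f}")  # 25.0
```

The candidate's answers are identical in both cases; only the comparison group changes, which is why a score should be read against a norm group that resembles the people being tested.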

