To address heightened concerns about selecting candidates who may have used generative AI tools while attempting tests, we have strengthened our reports with an additional code quality indicator: the AI Sense score.

“AI Sense”, an experimental feature, displays a probability score in a candidate’s report that indicates how likely it is that the candidate’s code was generated using AI tools such as ChatGPT, Bard, or GitHub Copilot.

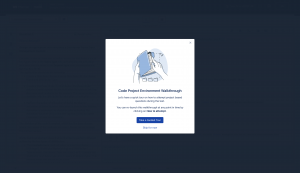

We are happy to share that we have revamped the UI and UX of our Code Project Environment.

The improved UI gives test-takers a larger area for writing code and for viewing results along with the console output.

The new UX includes a walkthrough that highlights the key components of the interface, such as full-screen view, language support (IntelliSense), error highlighting, and other editor features that help test-takers write code faster and more efficiently.

We hope you are as excited as we are to let test-takers experience the all-new interface of our Code Project Environment!

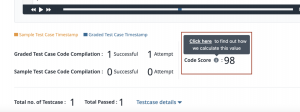

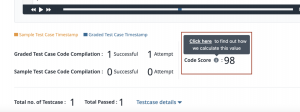

The Code Complexity score in CodeLysis reports has been replaced with a new parameter called Code Score. Code Score indicates the quality of a candidate’s submitted code by differentiating complex code from simpler code.

Code Score uses more precise calculations than its predecessor and provides more reliable code quality metrics. It helps hiring managers make better-informed decisions that are not based solely on the accuracy of the candidate’s code.

Code Score can be calculated for 25+ programming languages in CodeLysis, compared to only 5 for Code Complexity.

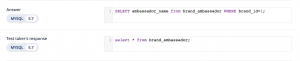

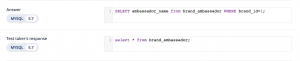

The database variant in which the candidate attempted a database query question is now visible in the report itself. This improvement gives users greater transparency into the queries written by the candidate.

Having visibility into the database variant allows users to gauge the candidate’s familiarity and expertise with specific query languages.

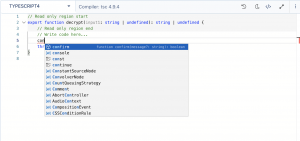

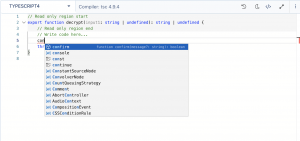

We are happy to announce that we have added support for version 4.9.4 of TypeScript on our general-purpose backend coding environment (CodeLysis).

Clients can select TypeScript4 when creating or editing questions, and test-takers can attempt coding questions in TypeScript4 wherever it is available.

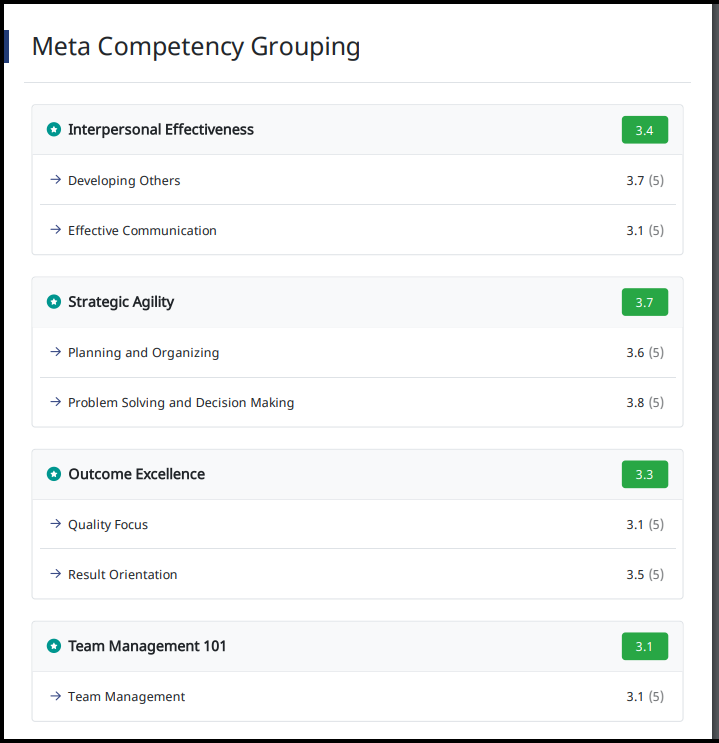

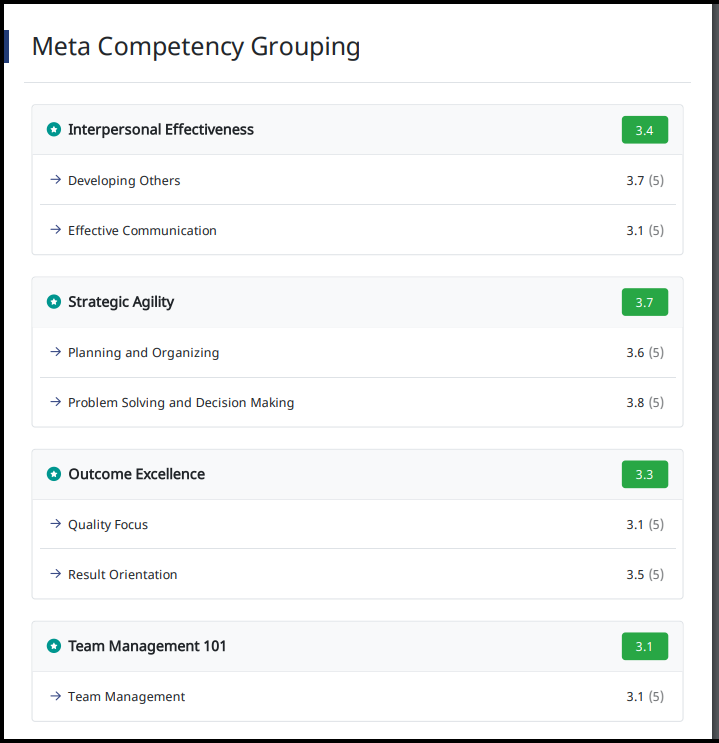

Now, group Competencies into Sub-competencies for improved report analysis!

All competencies defined for a survey can now be organized into a Sub-competency framework by grouping them under Meta Competencies. For surveys with a large number of competencies, customers wanted to group them so they could see averages for each Meta Competency in addition to the averages already shown for individual competencies.
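To illustrate what a Meta Competency average could look like, here is a minimal sketch. It assumes, since these notes do not specify the calculation, that a Meta Competency’s score is the unweighted mean of the average ratings of the competencies grouped under it. The function name `meta_competency_averages` and the sample competency names are hypothetical.

```python
from statistics import mean


def meta_competency_averages(
    competency_averages: dict[str, float],
    grouping: dict[str, list[str]],
) -> dict[str, float]:
    """Average competency ratings within each Meta Competency.

    Assumption (not confirmed by the release notes): the Meta Competency score
    is the unweighted mean of its competencies' average ratings.
    """
    result: dict[str, float] = {}
    for meta, competencies in grouping.items():
        # Ignore competencies that have no rating yet.
        ratings = [competency_averages[c] for c in competencies if c in competency_averages]
        if ratings:  # skip Meta Competencies with no rated competencies
            result[meta] = round(mean(ratings), 2)
    return result


# Hypothetical example: three competencies grouped under two Meta Competencies.
averages = {"Communication": 4.0, "Collaboration": 3.5, "Planning": 3.0}
grouping = {"Interpersonal": ["Communication", "Collaboration"], "Execution": ["Planning"]}
print(meta_competency_averages(averages, grouping))  # {'Interpersonal': 3.75, 'Execution': 3.0}
```

A weighted mean, or averaging raw ratings instead of per-competency averages, would give different numbers, so treat this only as an illustration of the grouping idea.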

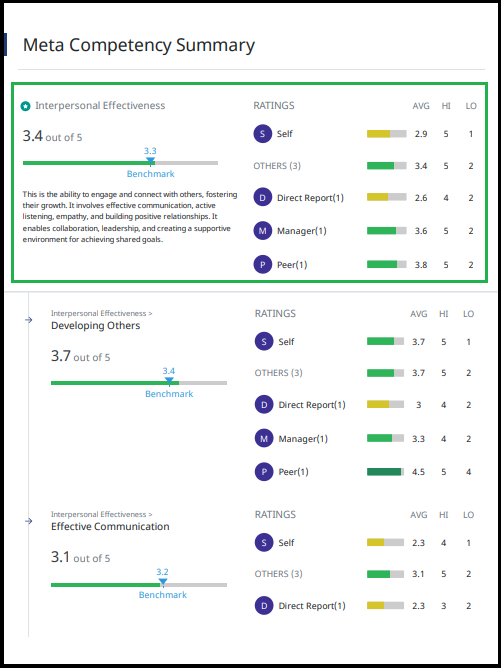

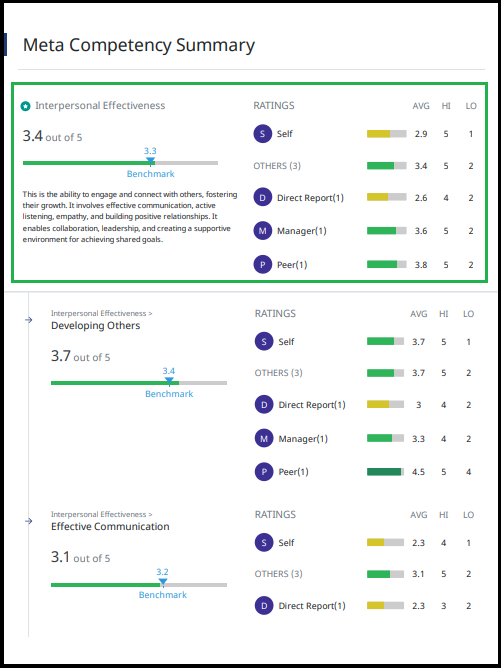

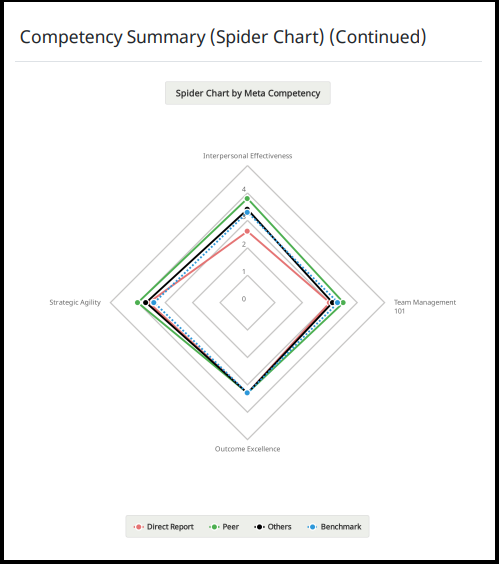

The snippets below are from a sample report and are provided for reference only.

To support this, we have introduced three new sections in the report:

1. Meta Competency Grouping:

Shows how the competencies have been grouped under the Meta Competencies along with the average ratings.

2. Meta Competency Summary:

Shows average ratings per relationship for each Meta Competency. This replaces and extends the Competency Summary section.

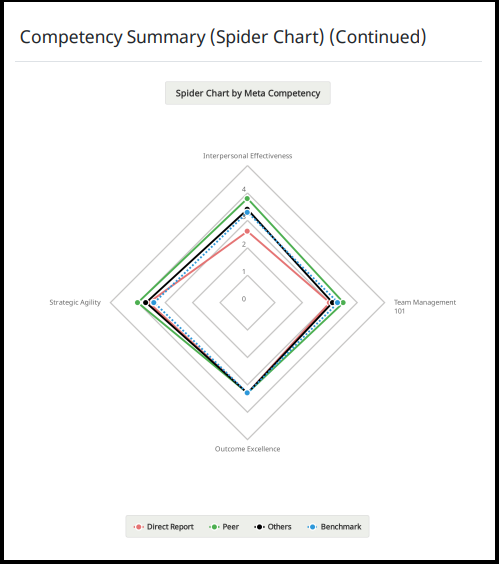

3. Spider Chart by Meta Competency:

Shows a new Spider Chart based on the Meta Competencies, in addition to the one shown for individual competencies.

Meta Competency data is also included in the ‘Data Excel’ of each survey.

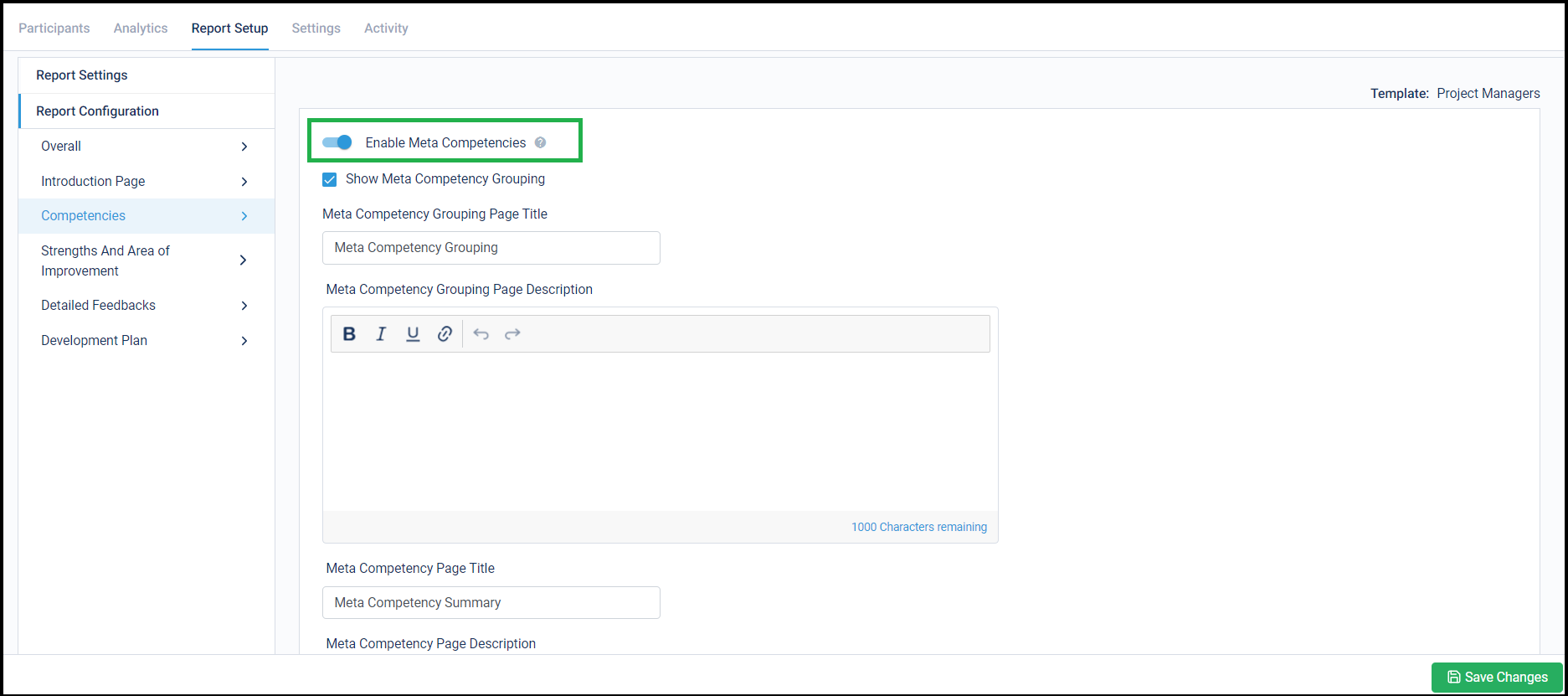

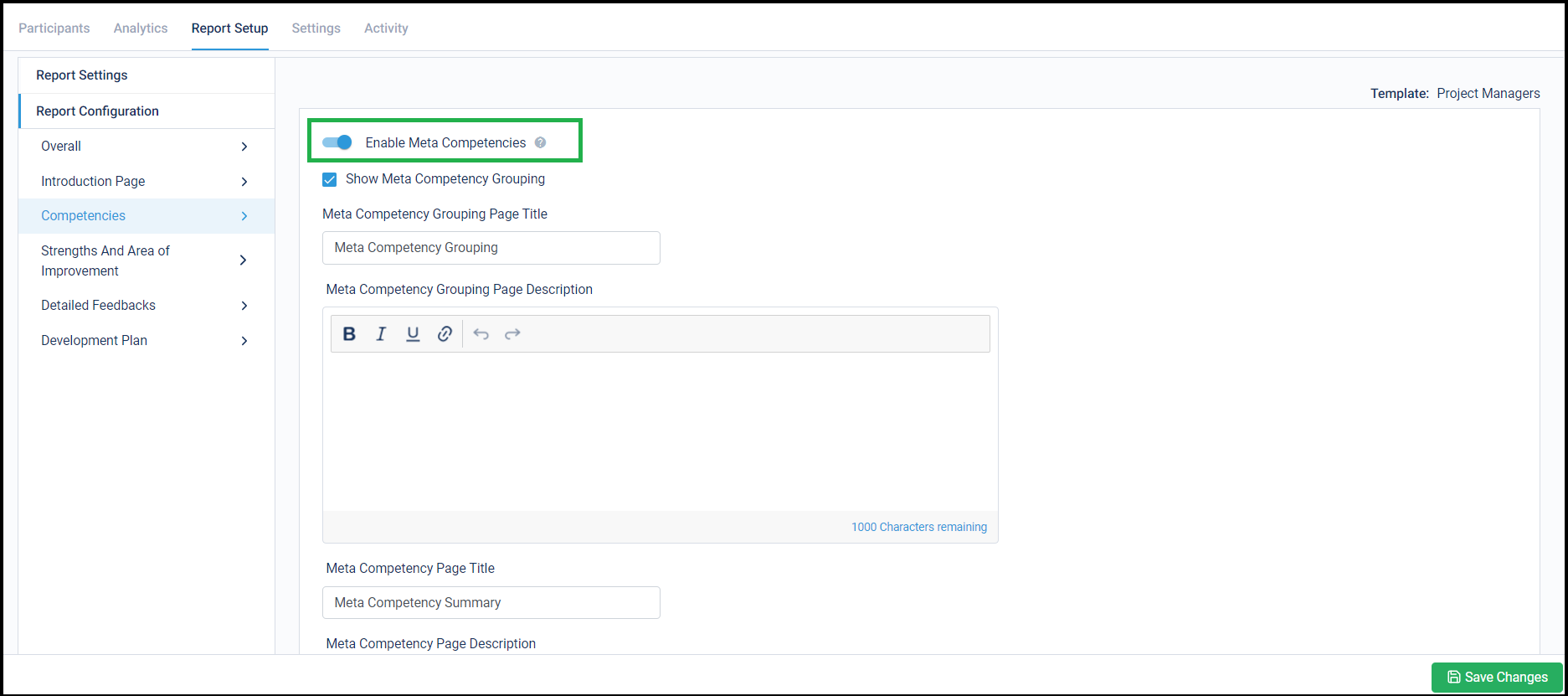

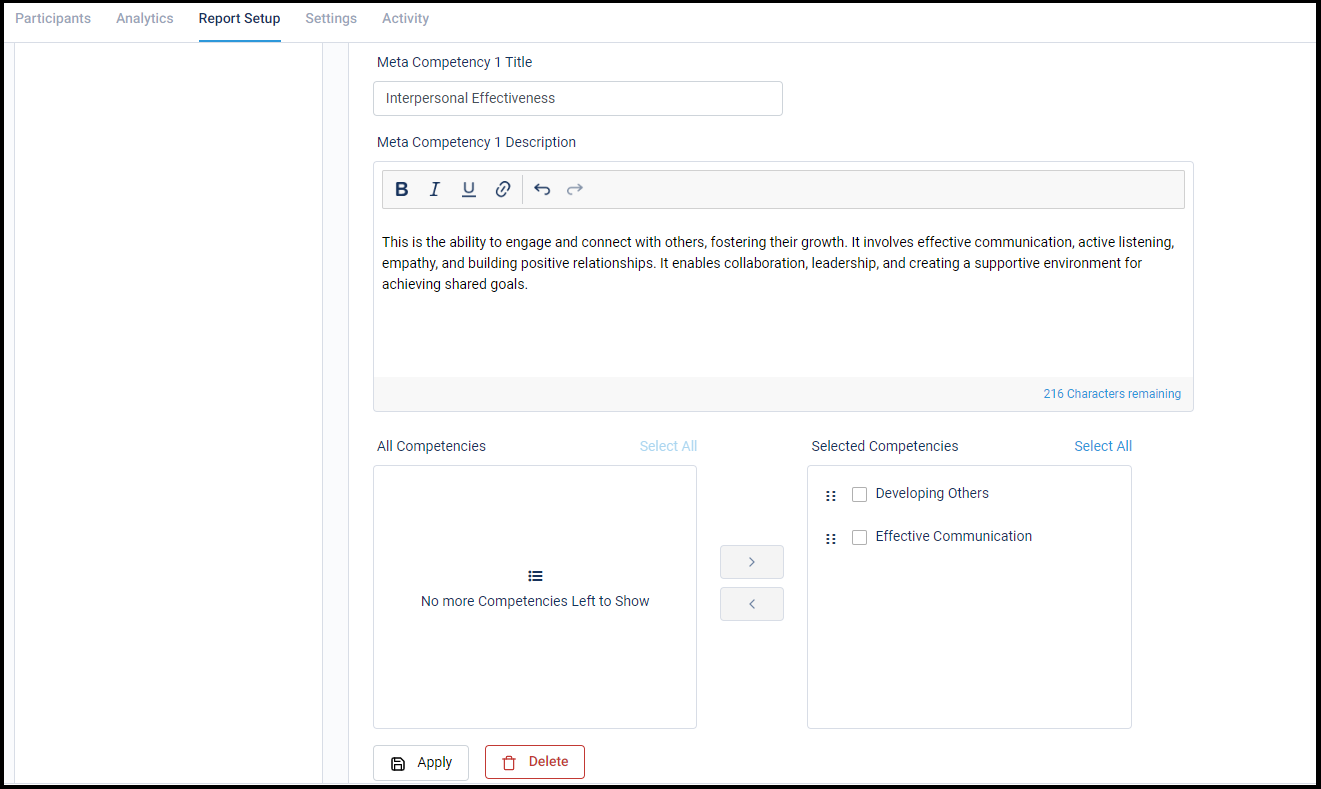

These changes can be made under Report Configuration:

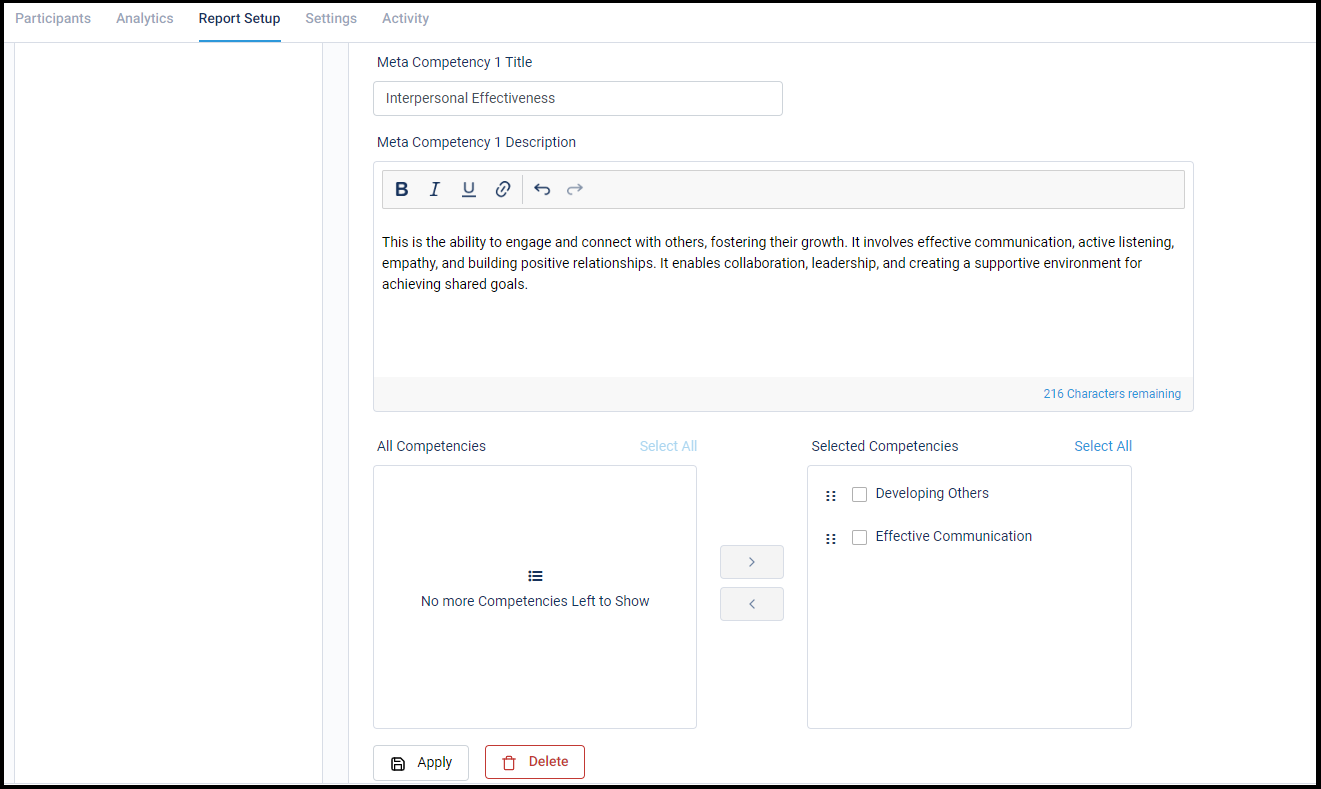

Competencies can be moved to newly created Meta Competencies from here:

Stay tuned for more amazing updates coming your way!

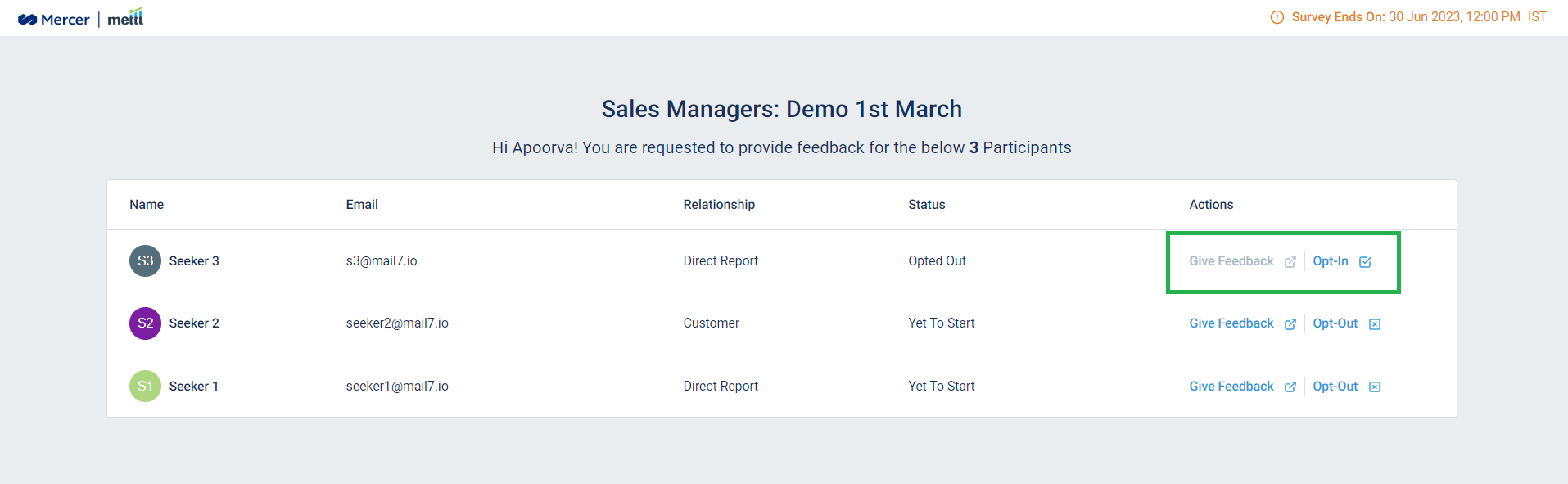

Feedback Providers can now opt out of responding for a Feedback Seeker!

In large organizations, HR might not have a complete Seeker<>Provider mapping to run the survey. Also, in some cultures it is considered rude not to invite certain Feedback Providers to fill the survey for a Feedback Seeker, which can make a Provider’s Feedback Seeker list very long.

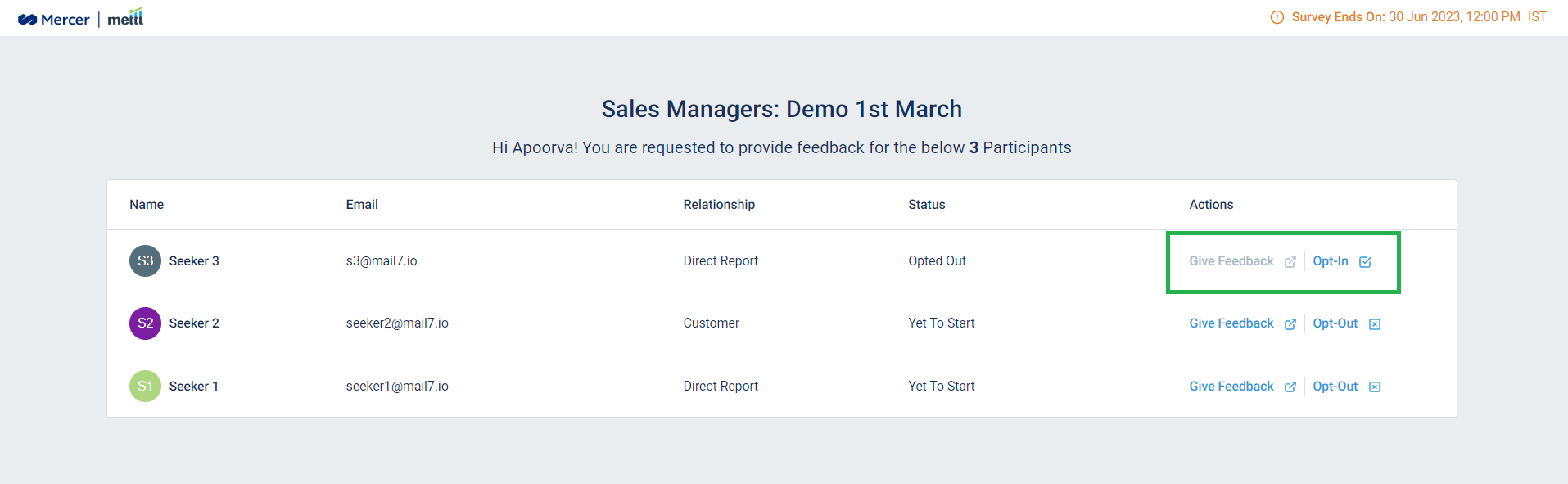

Feedback Providers can now opt out of responding for a Feedback Seeker if they lack relevant working experience with that Seeker or do not know the Seeker well enough to provide ratings. Once they opt out, Providers are not required to fill the survey for that Seeker.

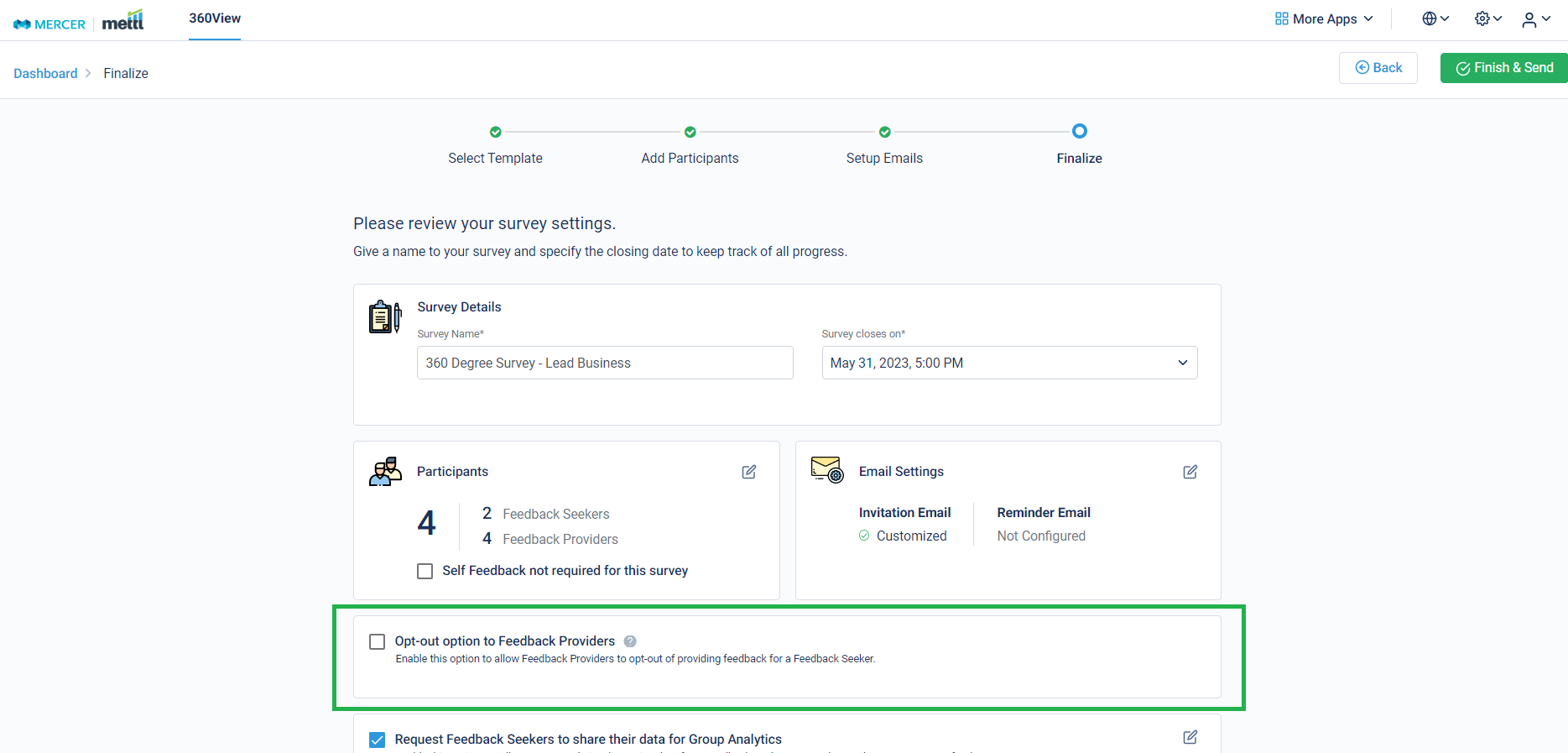

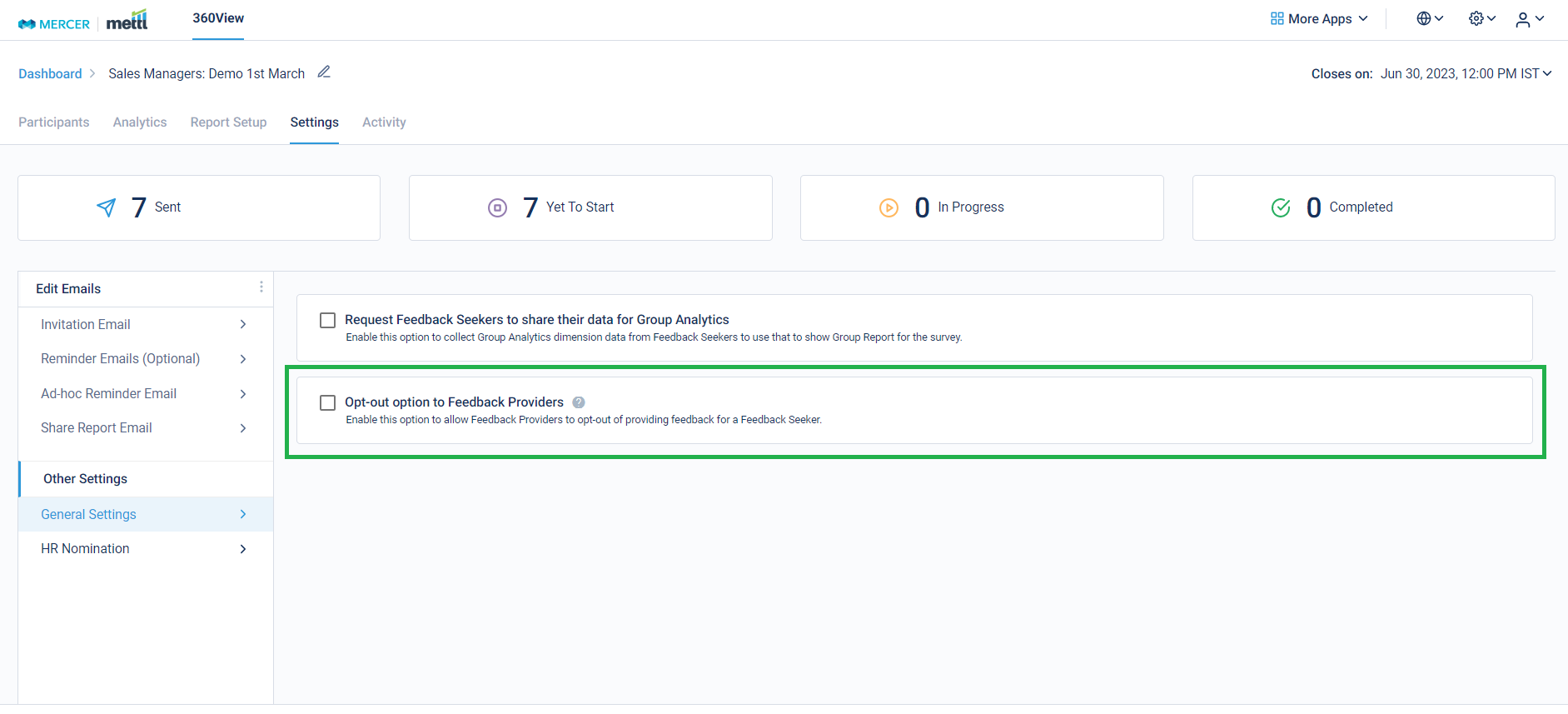

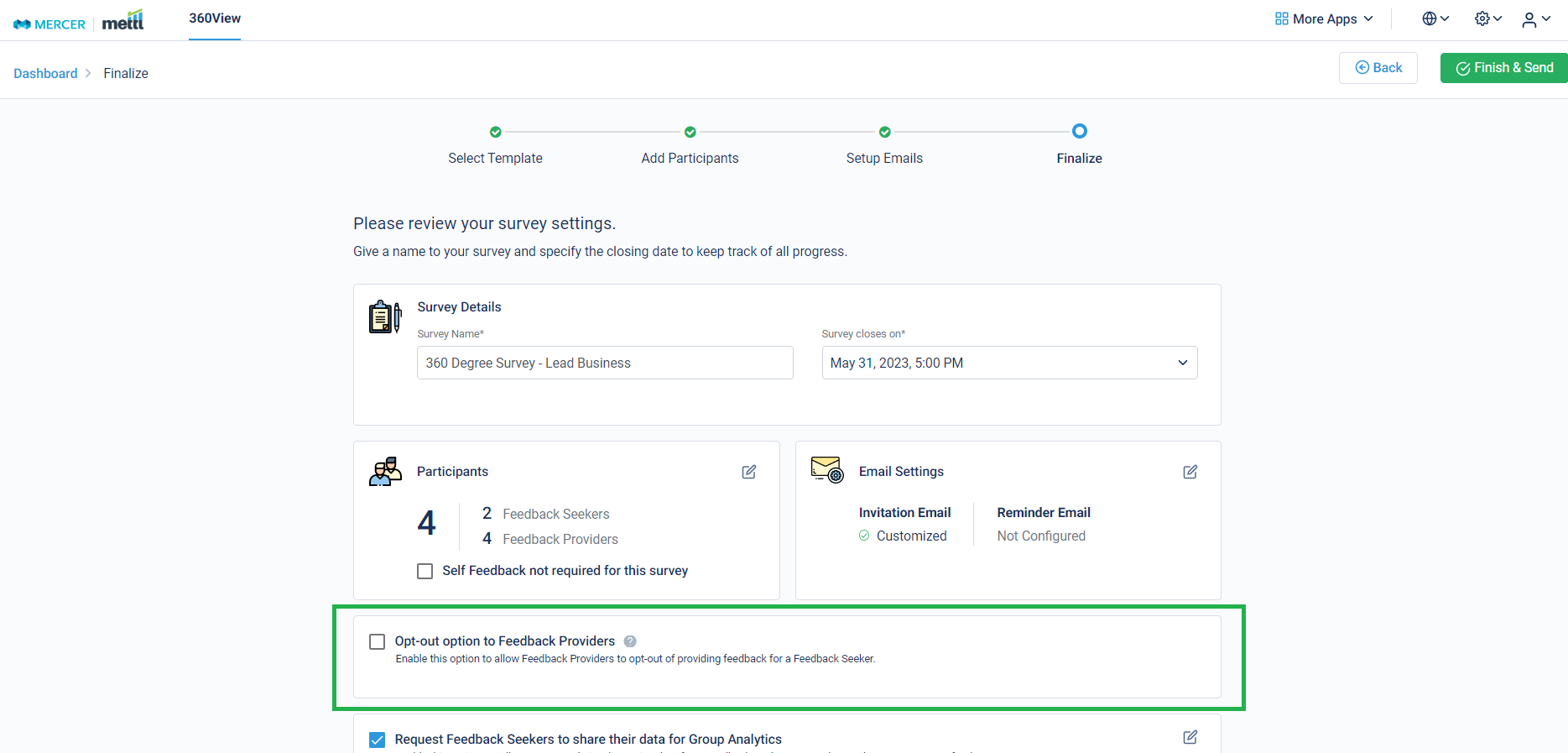

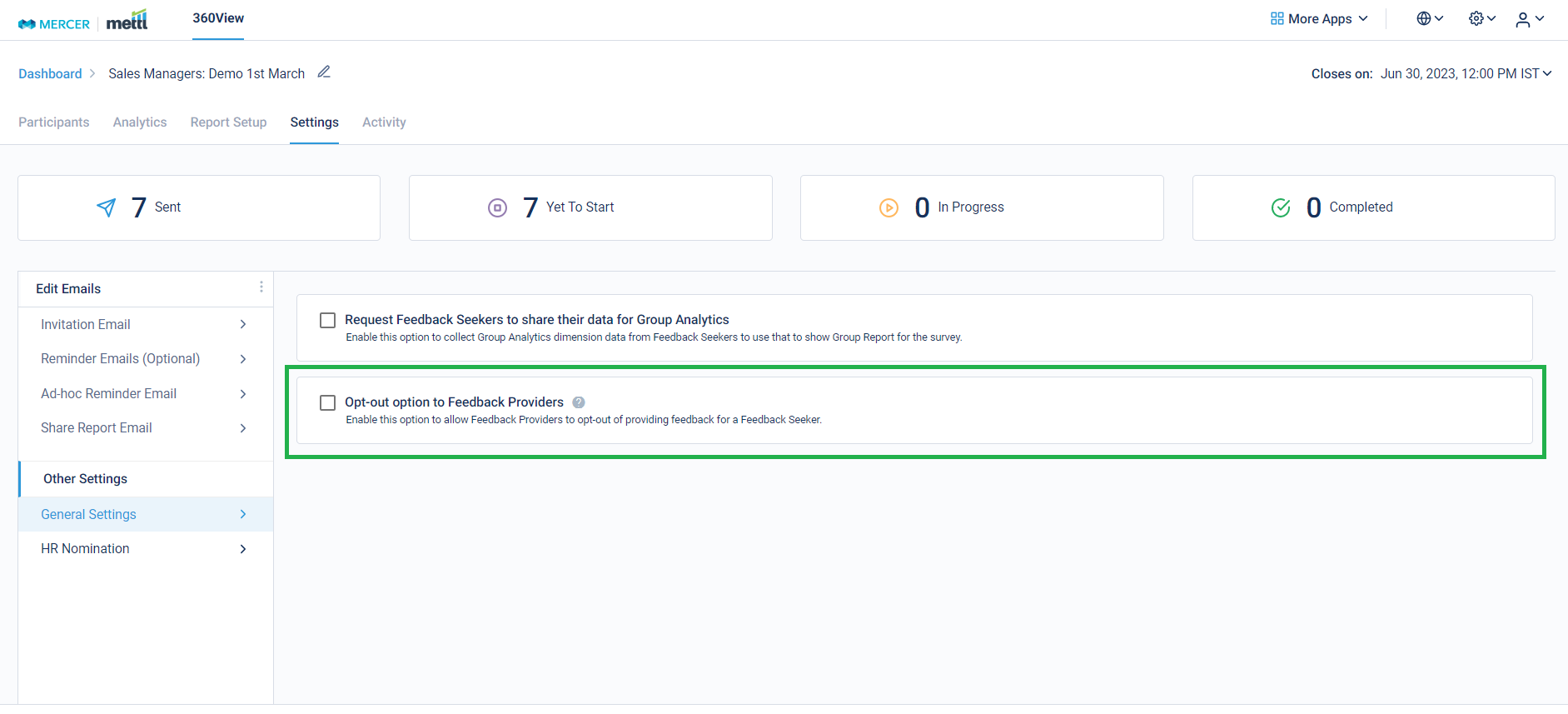

This setting can be enabled either at the ‘Finalize’ step of launching the survey or after the survey has been launched.

‘Finalize’ Step:

Survey running page:

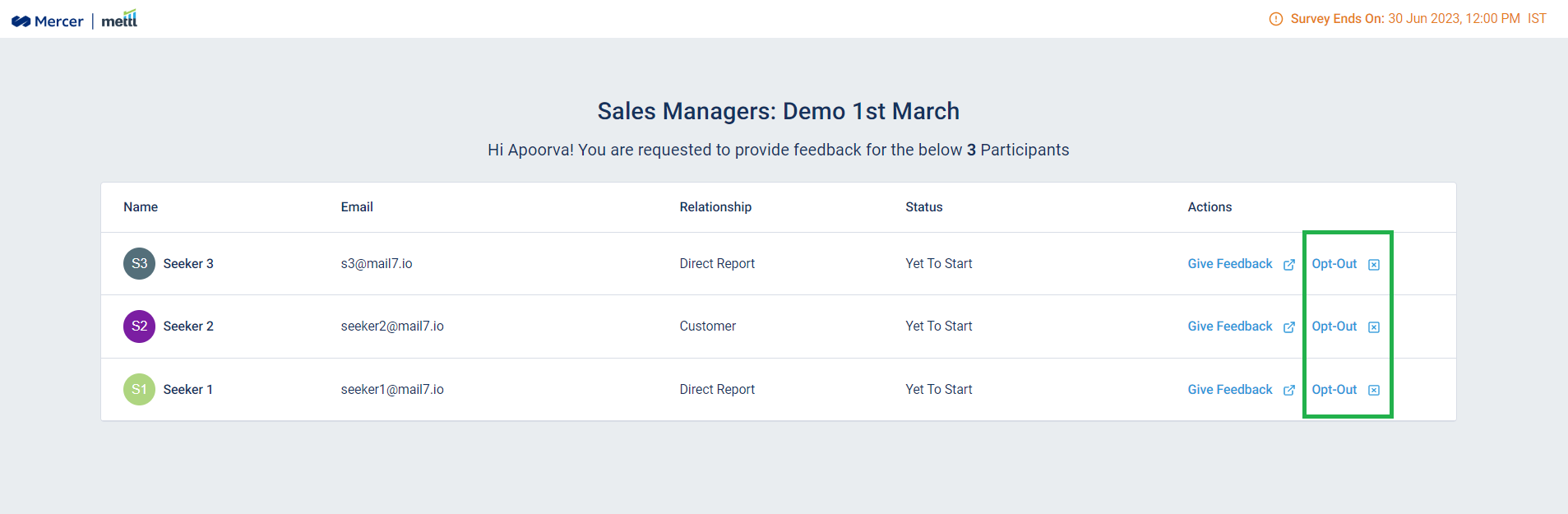

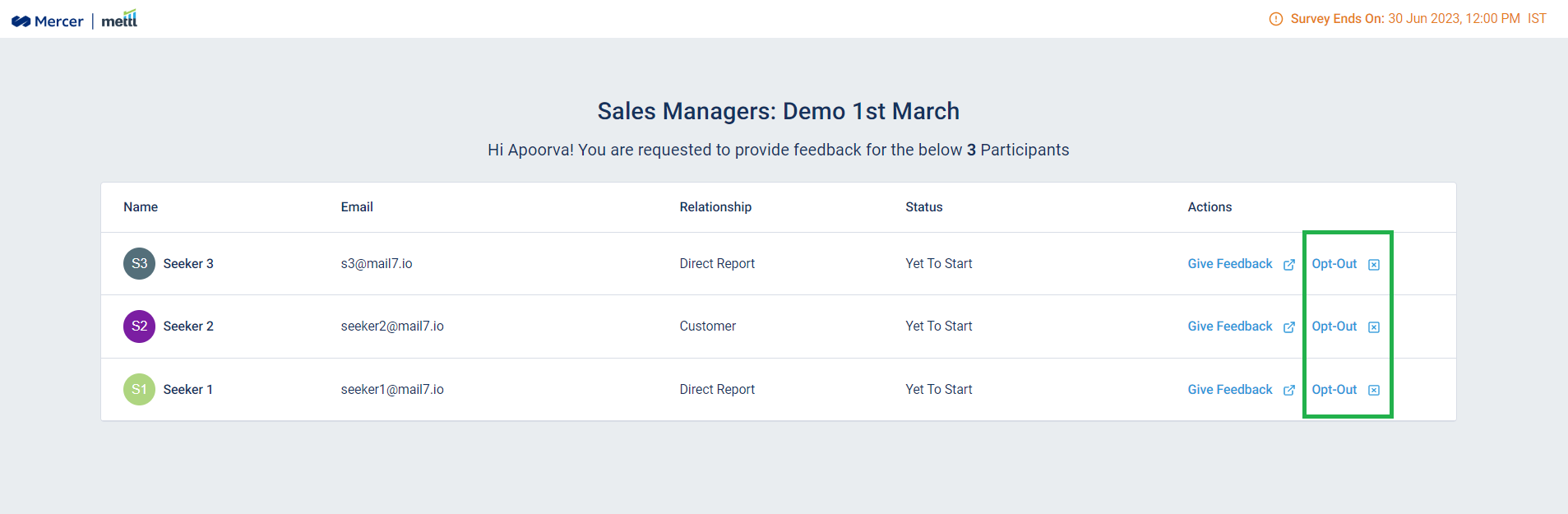

Once this option is enabled, it appears on the Respondent Page as follows:

Before opting out:

After opting out:


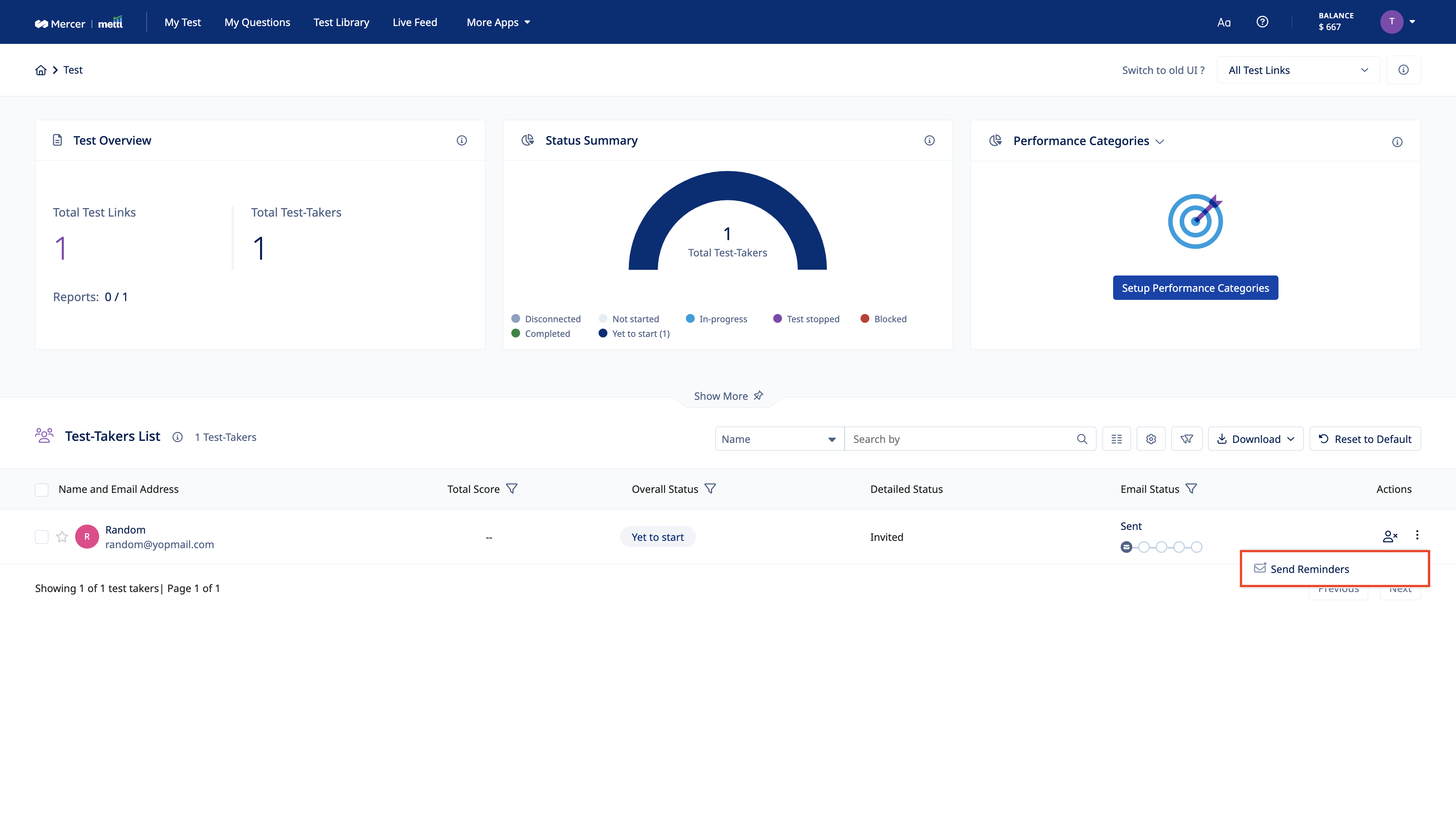

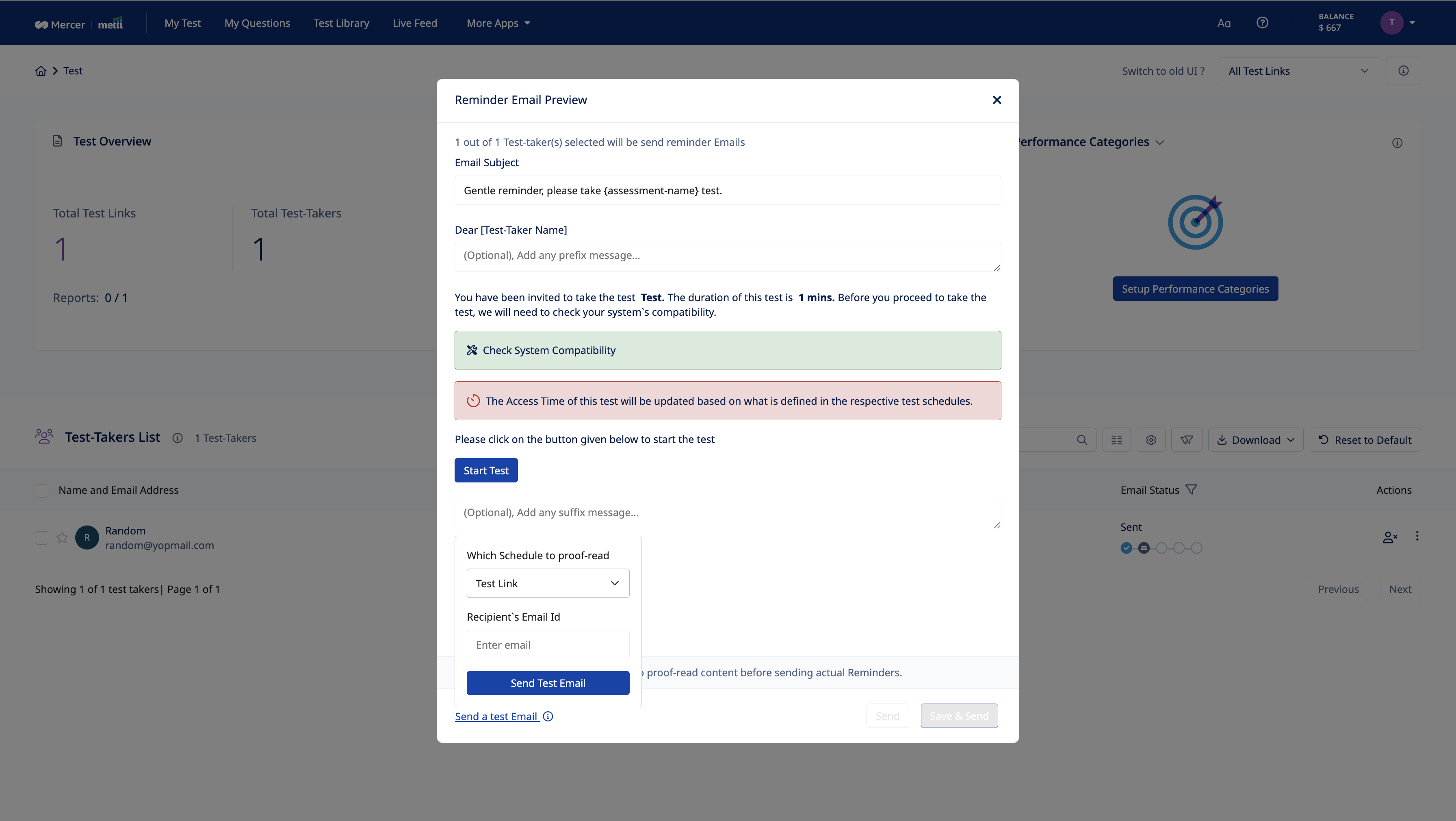

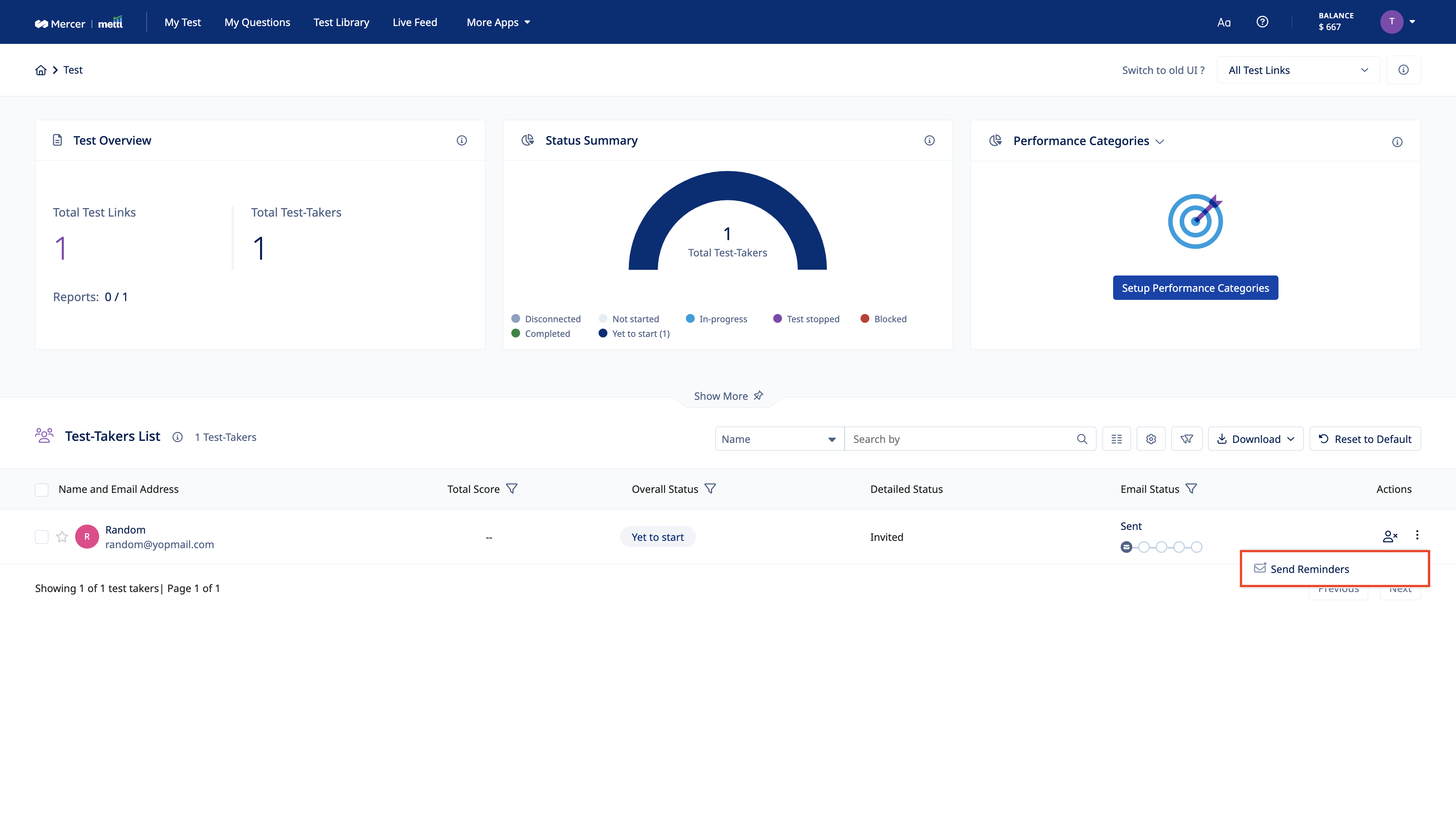

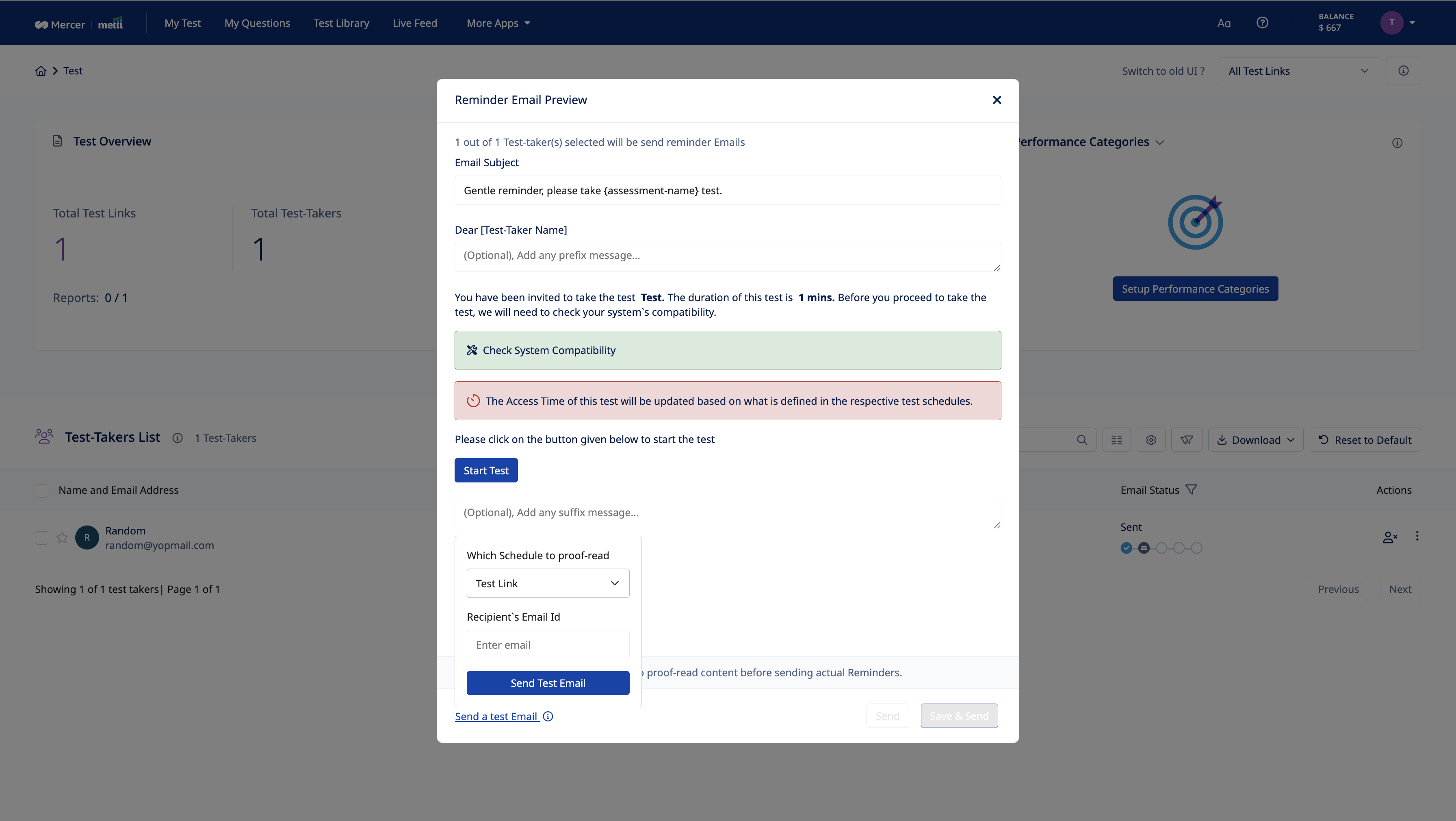

This feature lets clients send reminder emails to test-takers who were invited to take a test but have not yet registered for it. The key changes are:

- Introduction of “Test Email”: whenever the email template has been changed, the user must send a test email before sending the actual reminders.

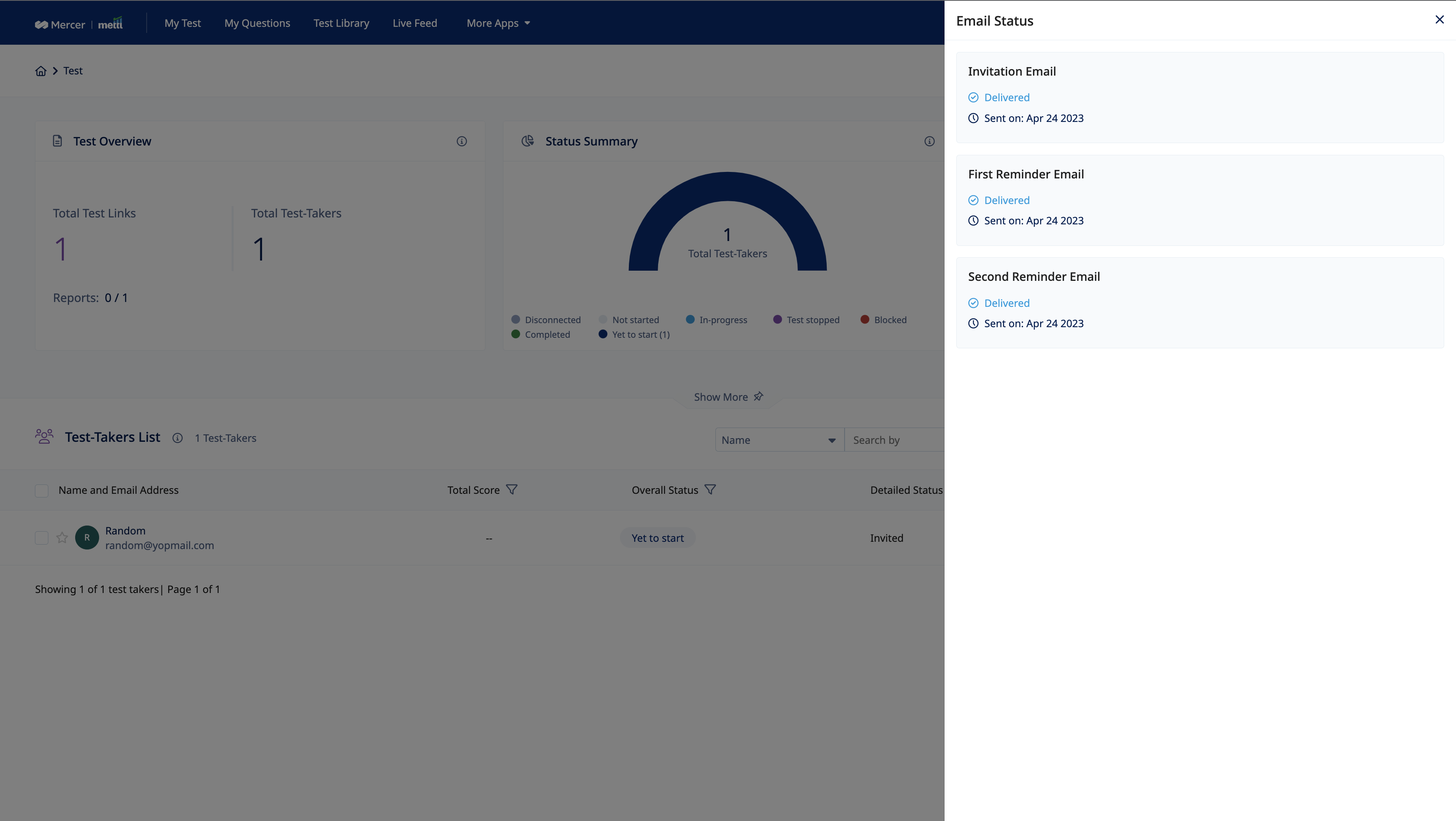

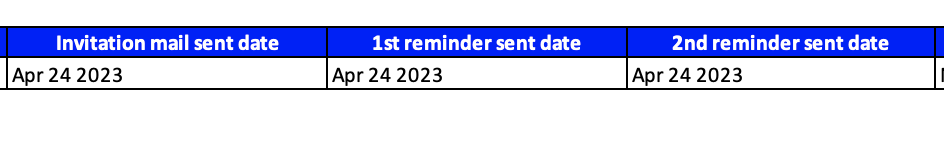

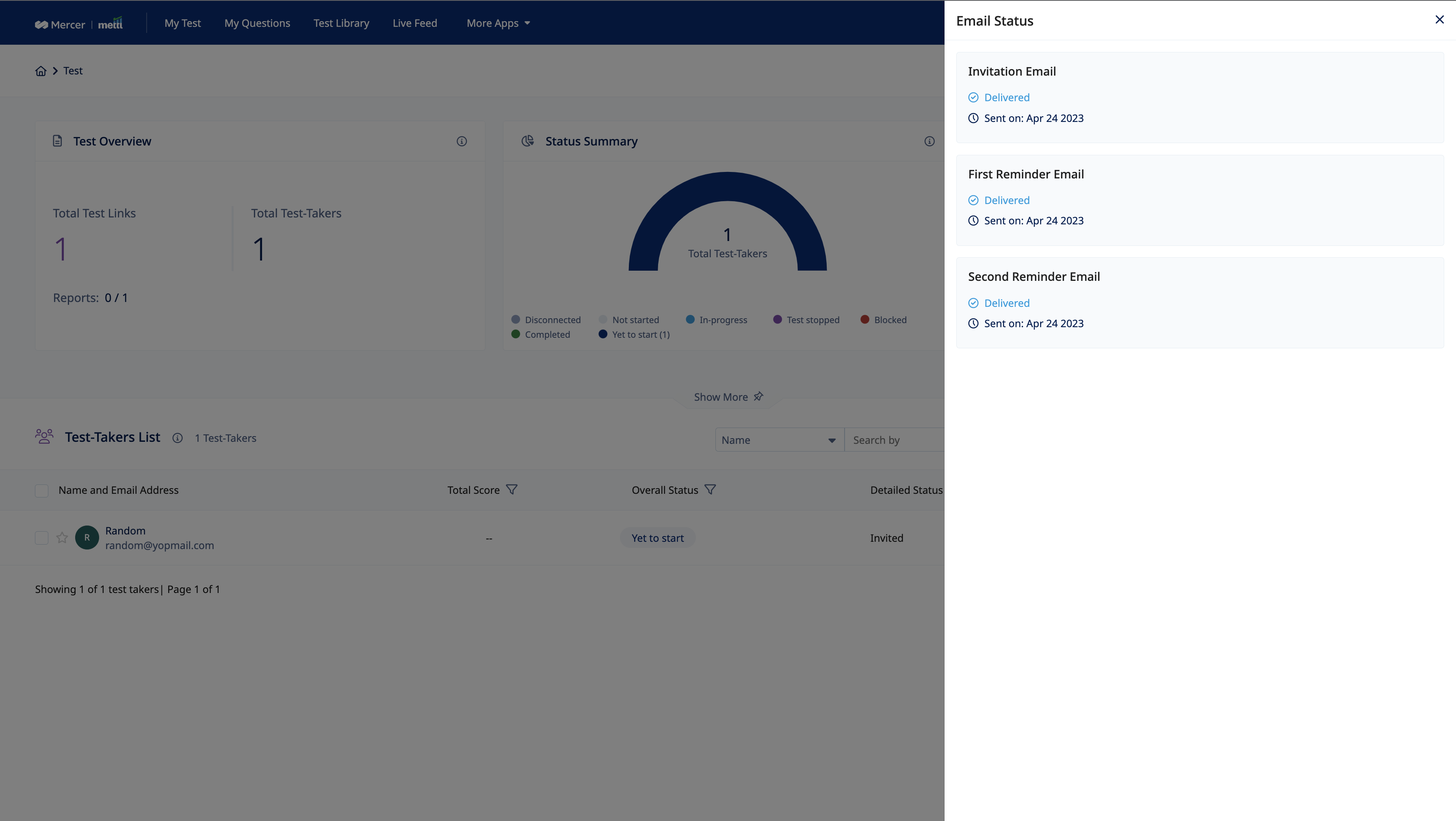

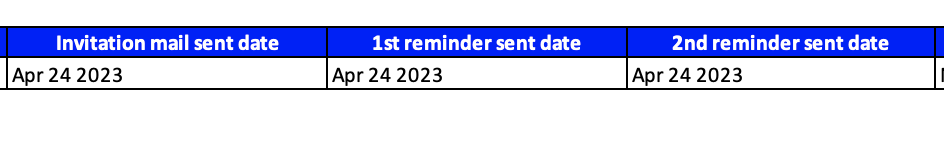

- A history of when reminder emails were sent to each test-taker is now maintained. This is reflected both in the user interface and in the Excel file downloaded from the Yet to Start tab.

Any test-taker with Yet to Start status on the results page can now be sent a reminder. The current limit is 4: in addition to the original invitation email, a test-taker can be sent at most 4 reminder emails.

Note: Even if a reminder email is not delivered or bounces because of an issue on the recipient’s side, it still counts as an attempt.
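The reminder rules above can be summarized in a short sketch: only test-takers in Yet to Start status are eligible, at most 4 reminders follow the invitation, and every send attempt counts toward the limit whether or not it was delivered. This is a hypothetical model for illustration only, not the platform’s implementation; the `Invitee` class, its fields, and the sample email address and dates are our own.

```python
from dataclasses import dataclass, field
from datetime import date

MAX_REMINDERS = 4  # limit from the release notes, in addition to the invitation email


@dataclass
class Invitee:
    """Hypothetical model of an invited test-taker and their reminder log."""

    email: str
    status: str = "Yet to Start"
    reminder_dates: list[date] = field(default_factory=list)

    def can_send_reminder(self) -> bool:
        # Only test-takers who have not started are eligible, up to the limit.
        return self.status == "Yet to Start" and len(self.reminder_dates) < MAX_REMINDERS

    def record_reminder(self, sent_on: date, delivered: bool) -> None:
        if not self.can_send_reminder():
            raise ValueError("reminder limit reached or test-taker is not Yet to Start")
        # `delivered` is deliberately ignored: bounced emails still count as an attempt.
        self.reminder_dates.append(sent_on)


invitee = Invitee("candidate@example.com")
for day in range(1, 6):
    if invitee.can_send_reminder():
        # The reminder on day 2 bounces but is still counted.
        invitee.record_reminder(date(2024, 1, day), delivered=(day != 2))
print(len(invitee.reminder_dates))  # 4
```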

A log of sent reminders is also available, so you can track how many reminder emails each invited test-taker has received.

The Excel file downloaded for test-takers in Yet to Start status now also includes the dates on which reminder emails were sent.

Download Manager

A single place where users can track download requests, view the latest status of each request, and download documents. It covers download requests raised from:

- Results Dashboard

- Report+ page

- Test-taker bulk data download

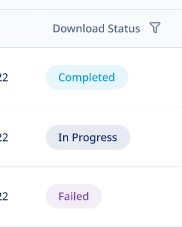

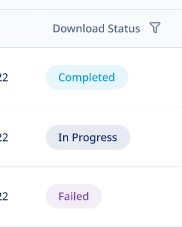

The latest status of each request (In progress, Completed, or Failed) can be viewed here. Files for completed requests can be downloaded directly from this page.


- In progress: The download has begun and the request is being processed.

- Completed: The files have been generated and can be downloaded.

- Failed: The request has failed. You can re-trigger the request from the Actions column.
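The three statuses and the re-trigger action behave like a small state machine. The sketch below is a hypothetical model of that lifecycle, written only to illustrate the list above; the `RequestStatus` and `DownloadRequest` names and their methods are our own, not part of the platform.

```python
from enum import Enum


class RequestStatus(Enum):
    IN_PROGRESS = "In progress"
    COMPLETED = "Completed"
    FAILED = "Failed"


class DownloadRequest:
    """Hypothetical model of a download request tracked by the Download Manager."""

    def __init__(self, source_page: str) -> None:
        self.source_page = source_page
        self.status = RequestStatus.IN_PROGRESS  # processing starts immediately

    def finish(self, success: bool) -> None:
        if self.status is not RequestStatus.IN_PROGRESS:
            raise ValueError("only in-progress requests can finish")
        self.status = RequestStatus.COMPLETED if success else RequestStatus.FAILED

    def can_download(self) -> bool:
        # Files are downloadable only once the request has completed.
        return self.status is RequestStatus.COMPLETED

    def retrigger(self) -> None:
        # Mirrors re-triggering a failed request from the Actions column.
        if self.status is not RequestStatus.FAILED:
            raise ValueError("only failed requests can be re-triggered")
        self.status = RequestStatus.IN_PROGRESS


req = DownloadRequest("Results Dashboard")
req.finish(success=False)   # the first attempt fails
req.retrigger()             # re-triggered by the user
req.finish(success=True)
print(req.status.value, req.can_download())  # Completed True
```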

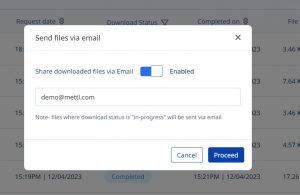

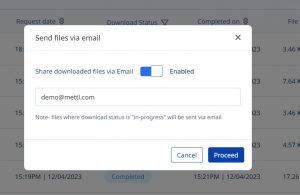

Email notifications can be enabled in one click. Files are then also delivered by email, in addition to being available on the Download Manager page. Emails are sent both for requests that complete successfully and for requests that fail.

Along with the latest status, the table shows the requested date, completed date, file size, the source page from which the file was requested, and (for admins only) details of the users who raised download requests on the platform.
